What Happened to the Internet of Things?

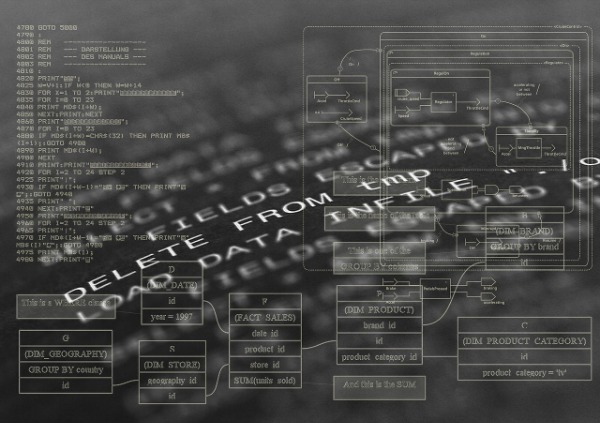

IoT applications

After writing here about how the Internet and websites are not forever, I started looking at some old posts that perhaps should be deleted or updated. With 2200+ posts here since 2006, that seems like an overwhelming and unprofitable use of my time. Plus, maybe an old post has some historical value. But I do know that there are older posts that have links to things that just don't exist on the Internet anymore.

The last post I wrote here labeled "Internet of Things" (IoT) was in June 2021. IoT was on Gartner's trends list in 2012, and my first post about IoT here was in 2009, so I thought a new update was due.

When I wrote about this in 2014, there were around 10 billion connected devices. In 2024, the number has increased to over 30 billion devices, ranging from smart home gadgets (e.g., thermostats, speakers) to industrial machines and healthcare devices. Platforms like Amazon Alexa, Google Home, and Apple HomeKit provide hubs for connecting and controlling a range of IoT devices.

The past 10 years have seen the IoT landscape evolve from a collection of isolated devices to a more integrated, intelligent, and secure ecosystem. Advancements in connectivity, AI, edge computing, security, and standardization have made IoT more powerful, reliable, and accessible, with applications transforming industries, enhancing daily life, and reshaping how we interact with technology. The number of connected devices has skyrocketed, with billions of IoT devices now in use worldwide. This widespread connectivity has enabled smarter homes, cities, and industries.

IoT devices have become more user-friendly and accessible, with smart speakers, wearables, and home automation systems becoming commonplace in households. If you have a washing machine or dryer that reminds you via an app about its cycles, or a thermostat that knows when you are in a room or on vacation, then IoT is in your home, whether you use that term or not.

Surveying the topic online turned up a good number of things that have pushed IoT forward or that IoT has pushed forward. Most recently, I would say that the three big things that have pushed IoT forward are 5G and advanced connectivity, the rise of edge computing, and AI and machine learning integration.

Technological improvements, such as the rollout of 5G networks, have greatly increased the speed and reliability of IoT connections. This has allowed for real-time data processing and more efficient communication between devices.

Many IoT devices now incorporate edge computing and AI to process data locally, reducing the reliance on cloud-based servers. This allows faster decision-making, less latency, and improved security by limiting the amount of data transmitted. IoT devices have increasingly incorporated AI and machine learning for predictive analytics and automation. This shift has allowed for smarter decision-making and automation in various industries, such as manufacturing (predictive maintenance), healthcare (patient monitoring), and agriculture (smart farming).

The integration of big data and advanced analytics has enabled more sophisticated insights from IoT data. This has led to better decision-making, predictive maintenance, and personalized user experiences.

One reason why I have heard less about IoT (and written less about it) is that it has expanded beyond consumer devices to industrial applications. I discovered a new term, Industrial Internet of Things (IIoT), which covers smart manufacturing, agriculture, healthcare, and transportation, all with the aim of improving efficiency and productivity.

Concerns have also emerged. As IoT devices have proliferated, so have worries about security. New cybersecurity measures have been put in place to protect data and the privacy of users, and new protocols and encryption standards are being developed to guard against vulnerabilities, with an emphasis on device authentication and secure communication.

The rollout of 5G has enhanced IoT capabilities by providing faster, more reliable connections. This has enabled more efficient real-time data processing for smart cities, autonomous vehicles, and industrial IoT applications, which can now operate at a larger scale and with lower latency.

IoT devices are now able to use machine learning and AI to learn from user behavior and improve their performance. For example, smart thermostats can learn a household’s schedule and adjust settings automatically, while security cameras can differentiate between human and non-human motion.

Edge computing has allowed IoT devices to process data locally rather than relying solely on cloud-based servers. This reduces latency and bandwidth usage, making it especially beneficial for time-sensitive applications like healthcare monitoring, industrial automation, and smart grids.
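To make the idea of local processing a bit more concrete, here is a minimal Python sketch of what a device might do before sending anything to the cloud. It is only an illustration under my own assumptions: the function name `summarize_on_device`, the sample temperature readings, and the threshold of 90.0 are all hypothetical and do not come from any particular IoT platform.

```python
from statistics import mean


def summarize_on_device(readings, threshold):
    """Reduce a batch of raw sensor readings to a small summary.

    Hypothetical helper: a real device would follow its platform's SDK.
    Only the summary (plus any readings above the threshold) would be
    sent upstream, instead of every raw reading.
    """
    alerts = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": round(mean(readings), 2),
        "max": max(readings),
        "alerts": alerts,
    }


# Made-up temperature readings collected locally over a short window.
readings = [71.8, 72.1, 72.0, 98.6, 72.3, 71.9]

payload = summarize_on_device(readings, threshold=90.0)
print(payload)
```

The device handles six readings but transmits one small summary, with only the single out-of-range value singled out. That is the bandwidth and latency saving in miniature.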

Despite the growth, the IoT market faces challenges such as chipset supply constraints, economic uncertainties, and geopolitical conflicts.

